Internet History in Brief

How did we get here?

Anyone who knows me well knows that I’ve been playing around with computers for a long while now. I was doing so even before I met most of the people I've now known for a long, long time. I was exposed to my first computer at the end of 1977. It was a crude, limited thing at the time but nonetheless fascinating to me.

I became interested and intrigued so for years afterwards computers slowly became one of my hobbies and even a sideline --- one that many looked upon as perplexing and puzzling and others even a bit of a waste-of-time and money. My generation was the last one to leave the school system before computers arrived on scene anywhere at all within standard education curriculums. We simply didn't have them. However, we did have big rooms of manual and semi-electric typewriters and were offered the wonderful chance of learning how to *type* in the grand tradition of 100 bygone years. I will always fondly remember my teacher Delmer and his stories.

Surprisingly, very many people over 15 years of age around 1995 or so hadn't ever had experience with any computer at all. Most hadn't any desire or use for one, didn’t see much purpose in them on a personal level, and certainly didn't envision ever getting one for themselves. In fact, they'd often defiantly and proudly say as much if asked.

In their defense, Personal Computers (PCs and related gear) were a whole lot more expensive then, than they are now. That was a factor. The odd few who had a few extra bucks burning a hole in their pockets and were curious enough to get one would more often than not toy with it for a few weeks, decide it wasn't their thing, then give up and eventually let it gather dust in the corner of some room, unused.

There were no GUI (graphical user interface) displays, no splashy programs and games... just stark, raw DOS interfaces with an ever-spiraling depth of complex utilities and command phrases. Input was with a keyboard (no touchscreens, no voice commands) and even the 'mouse' was just a novel option in most cases for the few programs that could utilize it. Yup, computers were pretty much just 'geek' domain, in the old-school meaning of 'geek' (not a compliment back then)...

It was like that for a long time.

Then one day during the 1990s the Internet became available to the masses.

The common folk eventually became aware of it and its potential for access to information, ease of communication and the sheer joy of doing your banking, in your house! in your pajamas!!

BOOM. The world changed.

By now (2007) most everyone I know from the bygone days who viewed the computer as a strange, nerdy, geeky device that served no real purpose now has one at home that they regularly use to surf the net and to communicate with.

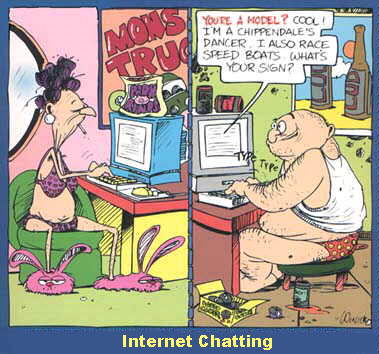

Some are hooked bad. Some even use them at work obsessively and when I see how regularly and possessed they seem as they race to them to once again check for a possible new email arrival within the last 1/2 hour or to browse a few more Web sites, I wonder what they ever did with all that extra company time before the Internet? Kids use computers a lot for school projects, online gaming and to carry on close, chatty relationships with their buddies. To the youngest, newest generation, computers have always been and are just a normal part of life for them.

What strikes me as a little bit unfair at times is how there sometimes seems to be a debasement of the Internet by a lot of people. They don't actually understand what the Internet really is but don't hesitate to rail against it anyway. I think they think it should be a huge sterile predictable piece of software from some huge multi billion dollar company. They should try to think of it more as a doorway into another place that is the sum total of all of us who participate within it. It is intrinsically an empty shell that is full of humanity at its best and worst and its in between.

What makes it a fascinating place to visit isn’t the predictability of it but rather the opposite of that. Without people the Internet wouldn’t be anything at all. It’s the people who have made it interesting and diverse. The extraordinary power of hundreds of millions of people communicating freely, sharing and being exposed to other ideas, places they would otherwise never know anything about. It is helping to create a worldwide community with gradually more and more commonality, awareness and hopefully/eventually, tolerance between its members than the patchy, isolated world this once was. Just like in the real world, we must journey through it with sound judgment and awareness that all those things that we should avoid or be leery of in the real world, can exist here as well in perhaps one form or another. In time, perhaps a system to shelter the more fragile of disposition among us will evolve but for now it's the wild, wild west.

From time to time, because they know of my longtime acquaintance with computers, I will be asked by people that I know, "What, exactly, is the Internet? Where did it come from? Who owns it? Who runs it?"

Man. That’s a huge story.

It’s an incredible progression of events and circumstances involving tons of people. Some of it was planned but some of it, thankfully, was just fluke.

Most of the early story of its creation revolves around the building of the structure: that physical place or system of hardware and software the 'Net' lives within. These solid machines and wires you can grasp and squeeze but the 'Net'... you can't grab. It showed up a little later on, seemingly all on its own.

Like a carpenter who builds a solid house with all the trim, windows, sash and splash but it's the family that moves into it later and adds those things that only a family of people can, to make a house become a home.

It would almost appear that the first half of the Net was built by select individuals in society and the second half was built by the rest of the people in society.

Anyway, I think I can boil down this evolution well enough, however summarily, to give you an idea of its creation and hopefully won't be too long winded (...it may already be too late on that point):

Naturally the Internet could not exist without computers so you’d probably think that the history of the computer’s invention and development should be included first of all. I don’t think I want to get too much into that aspect. Suffice it to say that man has been inventing ways to have machines perform his mundane, repetitive tasks for a long, long time.

A Frenchman back in the early 1800s invented a weaving loom that was automatically controlled by punch cards. This could be a sort of computer I suppose. Or even before this guy, there was Pascal, after whom a computer programming language was named. He invented a simple calculator that worked with gears and wheels back in the mid 1600s. It was a device that had inputs, a mechanical means of figuring out the total for you, and would then output it.

Many, many other people came up with devices along these lines that helped with the mundane task of crunching big numbers over and over or did it faster than a person could. Other people developed the theories behind binary programming and the logic methods that later became the basis for how digital computers processed information and were programmed to handle operations.

All these experiments, successes, inventions, discoveries added to the human knowledge base that could be referenced by researchers as they endeavored to develop the computer machine concept even further and create more and more sophisticated devices.

However, it was after World War II and the beginnings of the ‘cold war’ period when computer advances really began to take off. Research demands money to sustain the people, facilities and materials needed to carry it out and up until then a lot of research was conducted privately or semi-privately through Universities and Institutes who didn’t have exceptionally deep pockets.

Around this time the U.S. Navy Department and Air Force Department decided that they needed a computerized, electronic defense system against the threat of Soviet bombers armed with nuclear weapons. Money to fund these projects suddenly became virtually limitless. From this came the development of huge 250 ton computers filling gigantic, air-conditioned rooms, each machine containing 50,000 to 60,000 vacuum tubes and taking days and days to 'program'. These dinosaurs could perform in seconds multitudes of calculations that used to take 50 or 100 mathematicians hours and hours.

Would you believe that these initial R&D projects and subsequent creations cost the U.S. people a couple and a half billion dollars? Frankly, I always thought it would have been cheaper just to yank one of the computers out of one of the UFOs that crashed at Roswell and just make a copy of it. But I think the Japanese people were still a bit ticked off at the Americans at this time and weren't in the mood to make a knock off for them yet.

The problem in the early days of computer development came from the fact that different departments in the U.S. government were working independently on their different projects with their own separate budgets and separate teams of engineers. Results and progression of the project meant better chances of continued or increased funding so there tended to be a bit of secrecy and hoarding of information between the various groups. Little sharing of discoveries was made.

Then it happened. In 1957 the Soviets launched Sputnik into orbit and caught the world by surprise.

The Americans lost their minds for a while there and felt they were losing the race to be the planet’s superior technological giant and world power. It particularly hit home hard for the President at the time, Dwight Eisenhower. He perceived their success at being first to do this as a setback in his own performance as leader of his country. He knew how the military was, inside and out, and was aware of what was going on. In 1958 when he addressed the nation on how things were, in his State of the Union address, he made it clear that the country needed to strive to advance its technology for its own good and safety. He strongly suggested it certainly should abandon the little turf wars going on between departments.

As a result, Ike created ARPA (the Advanced Research Projects Agency) with a huge budget at its disposal. It was given the mandate of developing advanced military research and development involving anti-missile defense and space research and development. However, the space part was given to the new agency called NASA shortly after. Soon ARPA expanded its mission to include all sorts of spin-off projects that required sharp minds and intense study. They found a lot of these smart people at MIT and the University of California, Berkeley. ARPA helped fund and supply huge time-sharing mainframe computer systems at these places as well as a place called SDC in Santa Monica. Here the ARPA funded researchers and engineers had access to the computation power of these huge mainframe computers to create and develop their projects and inventions on behalf of ARPA.

I suppose the dawning of the idea of a network happened as an afterthought when the Director of the IPTO (Information Processing Techniques Office, an old military department that converted to be demilitarized) had a flash of insight that seems commonly simple in hindsight now. He had three terminals in his building, in a single room, that were connected to the three remote locations (as mentioned previously), miles from his building, where ARPA helped fund and support the huge time-sharing mainframe computers. This Director could log in on any one of these three terminals and be connected to the facility's mainframe computer that particular terminal was linked to. If he wanted to visit a different facility's computer, he'd log off the terminal he was on and hop over to the next terminal over and log in again, this time gaining access to the particular computer attached to this terminal instead. One day it struck him naturally that engineers should try to build a system that had only ONE terminal but was networked with the three different computer systems all at the same time! This way a person could log in on a single terminal and go anywhere they wanted between the three separate computer systems.

After a meeting between this Director and the Director of ARPA it was decided that having a world of computers, each their own little isolated island, made no sense and was counterproductive. So a big pile of money was immediately made available for a new endeavor. The world is fortunate these men had a flash of foresight and wisdom on this day and were in the mood to do something about it. Engineers were told to create a way to successfully network these three isolated computer systems so they could 'talk' to one another. With time-sharing on each system becoming an issue due to few computers and more and more demand from engineers to get time on them, it made sense to utilize these expensive, million dollar plus machines as efficiently as possible. There might be redundancy in information from computer to computer that a network would eliminate, potential free time on one computer could be utilized remotely by an engineer at a different location through a network login, there could be greater communication between the various project teams spread out between the locations, sharing of ideas, discoveries, faster progress, etc.

Before anything could be physically built, years were spent on developing the foundation and principles of how a networked system would work. So many computers in this era had systems completely incompatible with each other. Different companies would build computers using the parts they designed into them and even the languages used to write the programs varied. Standards and standardization weren't prominent yet. Only a few men out of the crowds of many had the vision of where computers could go and what purposes they could serve. Often it was a slow struggle just to envision what the next step was in a process that hadn't ever existed. There were so many distractions to the engineers during the 60s. The race to get a man to the moon was at full tilt. There was Vietnam and the military demands for slicker technology to help with that police action. The whole U.S. country was involved in the turmoil of the civil rights movement and the tragic assassinations that would occur every few years. And the cold war still loomed as large as it ever had. It was a whirling, spinning time to try to remain focused and inventive in, that's for sure.

An engineer named Paul Baran might be credited as an early visionary of the Internet, at least for devising the theory of how it would actually function. Baran was part of a Californian ‘think-tank’ whose help was enlisted by military nuclear planners to develop and analyze different disastrous scenarios of catastrophic nuclear events and report back their opinions and research on how these scenarios would play out. He can probably be credited with being one of the first ‘system analysts’ in the computer world.

The military and the President wanted a failsafe system of advanced communication between them and every missile silo, outpost and command center on the continent. Part of the findings of research by the ‘think-tank’ was that under a nuclear strike, a nationwide communications cable network between various silos and command posts throughout the country would be phenomenally expensive to create (it didn't exist at that time in the sophistication that the big bosses wanted) and probably impossible to prevent from being destroyed or disabled by a single or multiple missile strike. You have to remember that at this point in history, such systems were usually more or less centralized and usually single, direct routes were made to the necessary outposts beyond the central one.

Baran was given the task of trying to find a cheaper, more guaranteed way of communication between all parties that could withstand a nuclear attack and still remain perfectly functional.

After much thought and contemplation he envisioned a digital network consisting of a non-centralized system of distributed nodes each with redundant interconnections to other nodes, around them in their immediate vicinity, thereby creating a multitude of random routes for communications to travel from node to node and ultimately from source to destination. A node is simply a relay station. In this case it would consist of a computer that could receive a digital message and then relay it along to any one of 3 or 4 or 5 other nodes/relay stations connected to it. Which node the message or information was relayed to would depend on their condition, capacity and availability at the time.

He visualized this system to look kind of like a gigantic stylized fishnet laying across the entire country from coast to coast. Each intersection of the net would be a node or relay station with a specific computer address. From each of these nodes would be 3 or 4 or 5 connections to the same number of nodes/relay stations surrounding it and so on. If you sent a transmission from a source node in Washington D.C. for example, on the outer edge of the net, it had a choice of many, many paths it could take from relay station to relay station as it made its way across the country. If a nuclear strike took out an entire section of the net, the relaying of the message simply continued on the many remaining alternative routes around the damaged section of the network using the paths that were still intact and the relay stations/nodes that were still standing.
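To make the fishnet idea concrete, here is a minimal sketch in Python. It is only an illustration, not Baran's actual design: the 5 by 5 grid, the function names `build_grid` and `find_route`, and the set of 'destroyed' relay stations are all assumptions made up for the example, and each node here has at most 4 neighbours rather than the 3 to 5 described above. It simply shows a message still finding a path after a chunk of the net is knocked out.

```python
from collections import deque

def build_grid(rows, cols):
    """Return a dict mapping each node (row, col) to its neighbouring nodes."""
    links = {}
    for r in range(rows):
        for c in range(cols):
            neighbours = []
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols:
                    neighbours.append((nr, nc))
            links[(r, c)] = neighbours
    return links

def find_route(links, source, destination, destroyed=frozenset()):
    """Breadth-first search for a shortest surviving route, or None."""
    if source in destroyed or destination in destroyed:
        return None
    previous = {source: None}      # remembers how each node was reached
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == destination:
            route = []
            while node is not None:
                route.append(node)
                node = previous[node]
            return route[::-1]
        for nxt in links[node]:
            if nxt not in destroyed and nxt not in previous:
                previous[nxt] = node
                queue.append(nxt)
    return None

net = build_grid(5, 5)
intact = find_route(net, (0, 0), (4, 4))

# Knock out a block of relay stations in the middle of the net.
damaged = {(1, 1), (1, 2), (2, 1), (2, 2), (2, 3), (3, 2)}
rerouted = find_route(net, (0, 0), (4, 4), destroyed=damaged)

print(len(intact) - 1, "hops with the net intact")
print(len(rerouted) - 1, "hops after the strike")
```

A message only becomes undeliverable when every path is cut, for example when all the neighbours of the source node are gone.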

He also proposed that any message, no matter what size it was, would be broken into uniform, small packets or pieces. These packets could then be sent out into the network, one immediately after the other, and each find their own, quickest path to their final destination along the multitude of path choices. Algorithms programmed into the node computers would determine at any given time what the most efficient route to the final destination would be, depending on current traffic on the transmission lines, etc. and send its packet along to the appropriate next node of choice. In this way the bandwidth required to move digital information quickly could be kept low and cheap. The system would simply re-assemble the packets at the final destination computer and present the information in its original form.
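The packet idea can be sketched in a few lines of Python. This is a toy illustration only: the packet fields (`to`, `seq`, `total`, `payload`), the 8-byte packet size and the address `node-17` are all made up for the example and do not come from Baran's design or any real network protocol. The last packet may also come out shorter than the others. The shuffle stands in for packets taking different routes and arriving out of order.

```python
import random

def split_into_packets(message, size, destination):
    """Cut a message into numbered packets of at most `size` bytes."""
    data = message.encode("utf-8")
    chunks = [data[i:i + size] for i in range(0, len(data), size)]
    return [
        {"to": destination, "seq": n, "total": len(chunks), "payload": chunk}
        for n, chunk in enumerate(chunks)
    ]

def reassemble(packets):
    """Put packets back in order and rebuild the original message."""
    ordered = sorted(packets, key=lambda p: p["seq"])
    if not ordered or len(ordered) != ordered[0]["total"]:
        raise ValueError("some packets are missing")
    return b"".join(p["payload"] for p in ordered).decode("utf-8")

message = "Any message, no matter what size, gets broken into small packets."
packets = split_into_packets(message, size=8, destination="node-17")

random.shuffle(packets)          # packets may arrive in any order
rebuilt = reassemble(packets)
print(rebuilt == message)
```

The sequence number is what lets the destination put the pieces back in order no matter which route each one took.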

Thus Baran’s design produced the basis for how the Internet functions today even though his intention was to create a survivable communications network in the event of war. But what sprang forth from his efforts was a highly efficient and non-taxing, proposed system of moving data. Today a person in Toronto accessing a Web site on an Internet service provider in Mexico might retrieve that information over a satellite link or through the ‘land-lines’ across the continental U.S. They may even get it by bouncing from satellite to ground, back and forth, the entire other way around the world, through Asia and the Pacific. It all depends how congested or busy any particular route is at a given time. This is Baran’s system; the systematic, incredibly high speed passing of packet after packet by the thousands of nodes along a maze of interconnected pathways until the destination address matches. With the speed of electricity and fiber optics these days, a fly around the world takes a second if not less.

But, getting back to the story, it was proposed that a system like this could utilize the existing telephone transmission lines and satellite network already established and in place. This phone system was very comprehensively distributed throughout the continent already if not the entire world! At this time AT&T (Ma Bell) was basically the regulated monopolistic caretaker of the entire system in the U.S. A major drawback was that it was an analog system. Everything about it, all that money invested in it, was centered exclusively around only analog technology.

Baran’s ideas were based on a much quicker, more efficient, digital system of technology. Using digital computers in any form of high volume in the movement of data, with the phone system as it existed at that time, would tie up considerable phone system resources if not completely disable entire branches of it with overcapacity. Not to mention that an analog system is highly prone to system failure in the event of a few ‘well placed’ bombs.

Analog signals are subject to deterioration over long distances, are affected by electromagnetic forces, and are always mixed in with varying degrees of ‘noise’ (many other ‘garbage’ frequencies supplemental to the actual wanted signal). It was discovered that digital information relayed by a computer was sent in short, random bursts with a lot of ‘dead air’ between them. If the circuit-switching of the phone system could be computerized and made digital, dedicated phone lines wouldn’t have to be tied up. Instead a line could simply become active just before the burst of digital information was sent down it and then cut off and become available for some other stream of information from somewhere else right after the burst was finished. The computers, even in the mid 1960s, were faster than the analog electronic switches of the phone system at that time. So with the existing switches there was no chance of creating a system of high speed on and off switching between different data surges. Basically an analog phone line would be wide open from end to end and would only close at the end of the complete transmission, vulnerable the entire time and unavailable for anything else to use it. The number of route options starts to decline exponentially with this system.

The system as conceived by Baran was presented to corporate AT&T. It basically suggested they would have to scrap their analog equipment and install yet to be invented digital technology that would operate in an entirely different and more efficient manner in this bold new approach to the future of communications. The leaders of the company were men of their time. This new talk of computers and networking was a lot of theory at the time and even though it's easy to see it now, at the time it was hard to convince them that such changes were inevitable anyway and worth the considerable expense. They adamantly rejected all ideas of change or investing the huge sums of money it would require to convert their systems.

However, in AT&T's defense, their laboratories (Bell Labs) did invent the transistor as a device to boost the analog signals in their voice transmissions in order to keep the signals strong and above the detrimental noise level as they traveled to their destination. The invention of this electronic component created the revolution in electronics that snowballed and soon created more efficient ‘solid state’ products (no more tubes) and eventually led to the creation of the integrated circuits that comprise the ‘brains’ of computers today. They also invented the UNIX operating system. It was the most ‘bullet proof’, widely used system in the networking world for years and years and is still greatly in use today.

So the first networks were destined to be interconnected by an analog system (existing phone lines). But in the following years the technology in the phone system was gradually and rightfully converted to a digital format and the increased efficiency and possible bandwidth was realized.

In the late sixties, when the first networking of the mainframe computers at the different Universities and Institutes wasn’t yet a reality and still being proposed, an argument arose from the guys who ran these various host machines. They complained that their computers had scarce resources as it was, with all their time-sharing requirements. They argued that a network system added to their computers would demand a big chunk of what was already slim pickings for processing capacity, just to run the network functions of sending and receiving data, checking for errors in those transmissions, routing the information, verifying its arrival, etc.

ARPA engineers listened to them and as a result elaborated the design of the proposed network to then include ‘interface message processors’, IMPs for short. These would be smaller, intermediate computers installed at each mainframe or host computer. These IMPs would specifically handle all the networking tasks and would communicate the transferred information to and from their hosts. This would take the burden off the already overtaxed mainframe processors and also allow the project to continue to its conclusion without further conflict with the operators and part owners of the host computers. (Kind of the first version of a 'router' for you readers with knowledge about modern network systems).

What is interesting at this point is that because ARPA created, provided and owned these IMPs and therefore the network portion of the system, as it now was designed, the net could not exist without them. ARPA in effect controlled the net and I suppose could arbitrarily just as easily shut it down as keep it up. It's an interesting concept when you consider that, 35 years on, the Internet is perceived in a totally different way: no one actually owns it or has that power over it, and few are able to legislate it in very many ways. Even if you destroyed a huge section of it now, the remaining section would either continue on as normal or, at most, very quickly recover from any setback in function.

Brand new software was developed and created enabling network users to log into a remote machine. File transfer protocols were created. The former was called ‘TELNET’ and though it was improved over the years, it remained as the 'backbone' server-to-host network program. It is, in a way, the great-great granddad of your Internet browser today. What was most significant in the development of these programs was that for the first time many technical minds from all over the U.S. came together to work on them, agree upon and establish standards on how these network tasks and protocols should be written. Everything discovered and produced was made available to the public domain. It was the first ‘Open Source Code’ concept. In the following years this concept would be used by other people in developing refined, debugged and resilient software such as ‘Linux’, BBS software, etc.

What is a protocol?

It is a set of agreed rules, usually built into a program or collection of computer code, that allows two different computer systems to talk to and understand each other. Think of it this way:

Two Ambassadors from two different countries meet in a room. They speak two different languages and one has a gift he wishes to give to the other. He walks up to him, extends his hand and says, "Here's a gift for you." The other guy looks back and says, in his own language, "What's he saying? What does he want?" Just then an interpreter walks up between them, who knows how to speak both languages. He says to this other guy in his own language, "Oh, he says he would just like you to accept this gift he has for you." The Ambassador now understands and accepts the gift.

The interpreter here is roughly what a protocol is. It enables the 'shaking of hands' between two computers and creates mutual understanding so data can be passed between them. Sorry for the interruption but I hate it when I read an article that mentions something regularly as if everyone in the world knows what it is and I'm one of the ones who doesn't.
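For the curious, here is what the 'interpreter' might look like in a few lines of Python. It is a made-up toy format, not any real network protocol: both sides simply agree that every message is a 4-letter kind, then a 4-digit length, then the text itself. The names `encode` and `decode` and the `GIFT` message kind are assumptions invented for this sketch.

```python
def encode(kind, body):
    """Pack a message as: 4-letter kind, 4-digit length, then the body."""
    data = body.encode("utf-8")
    if len(kind) != 4 or len(data) > 9999:
        raise ValueError("message does not fit the agreed format")
    return kind.encode("ascii") + f"{len(data):04d}".encode("ascii") + data

def decode(raw):
    """Unpack a message that was produced by encode()."""
    kind = raw[:4].decode("ascii")
    length = int(raw[4:8].decode("ascii"))
    body = raw[8:8 + length].decode("utf-8")
    return kind, body

# One machine encodes, the other decodes; both follow the same rules.
wire = encode("GIFT", "Here's a gift for you.")
print(decode(wire))
```

The two computers can be completely different inside. As long as both follow the same rules for what goes on the wire, they understand each other.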

Finally the network between two separately located mainframe computers neared its completion in September, 1969 when the 1st IMP was finally finished and shipped to the computer room at UCLA in California. A month later the 2nd IMP was completed and shipped to Stanford Research Institute. Both were wired to their respective host computers. This basically created a two-node network, the simplest form. The link between the two IMPs was established through an ordinary telephone line. Computer data was sent between the two computers, as well as voice conferencing between the two computer operators, on the same phone line. UCLA proceeded to log into the SRI host computer via the telephone line. UCLA had to type the word ‘login’ to carry this out. They typed the ‘L’ and SRI reported back by voice that indeed an ‘L’ had transmitted and had keyed itself into SRI’s computer on his end. Then UCLA typed the ‘O’. Once again SRI reported back that the ‘O’ successfully traveled over. Then UCLA typed the ‘G’ and for some reason a bug occurred and at that instant the SRI host computer crashed!

So much for the first Internet experience of the brand new ARPANET, as it was now called.

However, the minor crash bugs were soon corrected and the first live and for real Internet experience had occurred in the world!

One of the early common uses of the ARPANET was as a mail device. But it wasn’t ever an official aspect of the network project. The stand-alone time-sharing mainframe systems in the Universities and Tech Institutes had always had little mail systems where users could send and retrieve messages but I don’t think of this technically as the email that we now know. It wasn’t between another machine, in another location. It was only between users sharing time on the same computer. It had been this way through most of the 1960s.

In the very beginning, this new ARPANET could be used by the techies and engineers to run programs remotely on a distant machine or transfer data files between the various locations. These would be computer code files, small compiled programs, etc., all mostly computer related and work related. But in 1971 a sharp MIT graduate named Tomlinson modified the file transfer protocol. This was the program used to transfer data files between two networked computers. Remember, it was ‘Open Source Code’, in the public domain and free for anyone to access and modify for their own purposes. He fashioned it into a small program that could send text messages across the ARPANET to a mailbox on another machine at a different location. This had never been done before.

It was the birth of real email.

He sent himself emails to a mailbox on a distant computer. I don’t know what he said in his first email. Maybe ‘Come here Watson?’.

What is a bit funny was that when Tomlinson’s buddies found out what he had created he was a bit embarrassed and even fearful. It is said that he said something like, "Shhhhh! Don’t tell the big guys, I know this isn’t the important things I should be working on…"

Tomlinson is also responsible for the use of the @ symbol we see in email addresses now. While he was writing his email program and protocols he discovered he needed a character to separate the name of the email’s recipient from the network-ID of the computer their mailbox was located on. Looking down at his keyboard, across all the different characters on it, he soon picked what he considered the best choice. The @ represented ‘at’ and he reasoned that ‘that person was 'at' that mail box’. It would never be a character within someone’s name or a computer’s identification tag. It was a perfect choice.
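The job the @ does is easy to show in Python. This is just a sketch of the idea, not Tomlinson's program: the function name `split_address` and the sample address are made up for the example.

```python
def split_address(address):
    """Split 'user@host' into the mailbox owner and the computer it lives on."""
    user, separator, host = address.rpartition("@")
    if not separator or not user or not host:
        raise ValueError(f"not a user@host address: {address!r}")
    return user, host

user, host = split_address("alice@host-b")
print(user, "is 'at'", host)
```

Because the @ is not expected to show up in either the person's name or the computer's name, the program always knows where one ends and the other begins.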

A majority of people soon became surprised and dumbfounded as the 70’s went by and they watched as email on the ARPANET became, above and beyond, the biggest component of its usage. Here was this incredibly expensive, government-funded system and it mostly was just a message writing machine? And the shocking thing was a lot of the messages had nothing to do with computers!

This really was part of the beginning of the communication and information age. No one foresaw it or planned it. A ‘revolution’, whose time had finally come, came naturally, created by the people using the system day-to-day, not by the people who developed it.

In 1973 another node was added to the ARPANET but for the first time this one was overseas, in Britain at University College London. That was significant enough but another smaller, interesting thing happened. Using a small split screen program named 'TALK' the guy in Britain was able to type real time messages on his screen to a guy in Los Angeles who could instantly type back his reply. This was one of the first online ‘chat rooms’ I suppose. The precursor to text messaging.

ARPANET grew and added more nodes but because it was still under the wing of the U.S. Department of Defense, participants had to adhere to tight rules and configurations. Membership into this special ‘club’ was very expensive and difficult to attain at that time. The ARPANET shut out more computer people than it let in and the computer society knew it.

Partly because of this, at this same time, all over the world (including the U.S.), other people were creating independent little networks, privately and outside the jurisdiction of ARPA. There was a radio transmission based system on the Hawaiian Islands that was appropriately called ALOHA. In France a network they called Cyclades was built. The British had a system. The U.S.S.R. had networked a bunch of military computers. There were networks in Italy and Sweden too.

Then a member of ARPA named Bob Kahn became aware of all these networks scattered everywhere and became obsessed with the idea of devising a way in which all of them and ARPANET could be interlinked into a huge, single supernetwork. He arranged a meeting of representatives from all these different world networks and gathered them in one place to discuss these possibilities and develop a plan of attack. Together, they formed the International Network Working Group (INWG). Over the next few years a quest to develop a method of interconnecting all these different styles of networks was undertaken.

Intensive work was done to develop a new idea of a system of 'gateways' (later called 'routers') that would allow this conglomerate of different-from-each-other networks to retain their original characteristics but still allow the seamless flow of packet traffic through and within each network. Kahn called this his 'internetworking' project and he partnered up with an odd but brilliant guy named Vinton Cerf. Cerf is sometimes credited as the 'father of the Internet' (though he and Kahn were 50/50 partners in the effort), because of the protocols and systems he developed to make the interconnection of this ragtag collection a reality.

The network in Hawaii, around this time, caught the attention of the U.S. military because of its use of radio waves to connect its various locations instead of landlines. This quickly led to the Department of Defense’s development of its satellite based worldwide military network called SATNET.

To further tweak the worldwide network, the Defense Department in the early 80s called for the protocols that moved information around to be standardized and upgraded, so that packets could flow more securely through one subnetwork into another, slightly different one. Upon its completion the use of this new protocol was more or less 'greatly encouraged' among all the participants. It became known as the ‘Transmission Control Protocol and Internet Protocol’. We know it today as TCP/IP. By 1983 pretty much everyone had consolidated on this protocol exclusively and all the other, now obsolete ones were dropped from the system.

The TCP/IP protocol in fact was a suite of a hundred or more detailed protocols but they all became known by this single generic term.

Once things were working smoothly a demonstration was carried out for military officials (mostly because they had provided the funding for the projects the past 20 years or more) to show the potential of this new Internet. Using a specially designed program for the occasion, a packet of information was sent from inside a mobile vehicle located on a highway in northern California intended for another location in a building only a couple hundred miles south. But instead of making it travel directly there, the program forced the data packet to travel the long route. Off it went, first using packet radio transmission, then making its way across the continent through the ARPANET, jumping up to space through satellite transmissions, zipping along phone lines through the myriad of subnetworks that were part of the system in Europe and Asia, back up to a satellite to fire across the Pacific Ocean, bouncing off Hawaii. Finally it reached its destination at the southern California mainframe. And it had taken only a couple of seconds and not a drop of data was lost. The planet's Internet had arrived.

Around 1983, another development was the integration of TCP/IP into the UNIX operating system that the majority of computers by then were using to run their networks on. This was a big step as it finally established a much needed, universal, standardized, solid software platform that the network could base itself on. UNIX became even more popular as the software of choice.

UNIX was also nice and cheap at that time and Bell Labs included the source code! They also gave anyone permission to alter it as needed and to share these changes with others. This was why it was preferred and adapted well to all the minicomputers that were quickly starting to show up at the Universities around the country. There probably wasn’t a single University that didn’t run their systems on UNIX.

UNIX is one of the factors responsible for the growth of the Internet into more of the ‘people’s system’.

The ARPANET at that time, how do I say this delicately, was elitist? Ok, let’s just say it, it was a regular snobby person’s club.

A lot of Universities by now had computer labs with their new, less expensive minicomputers in them, running UNIX software. The ever-growing computer science departments soon offered this new computer thing as a real academic discipline. An offshoot of electrical engineering with a generous helping of computer science gave you computer engineering. UNIX’s ‘C’ code could be pulled apart, applications could be created to run with it, and real time testing and sharing of programming efforts could be done. It was an incredible real life teaching and learning tool.

Because of UNIX's widespread use an Association of UNIX users was created in the mid-1970s. There were members in it from all across the continent and in Europe sharing their common bond of being UNIX users, teachers and students. Newsletters were commonly sent around keeping everyone up to date on what others were doing, advances, highlights, etc. The membership grew and it got increasingly harder to keep coverage thorough.

Early on a conference was held and a new innovation was announced to the members. Because a majority of UNIX computer enthusiasts had been kind of shut out from the ARPANET but still yearned for the network experience and the benefits it could bring in sharing information and communication, a system was designed that would come to be known as sort of a ‘poor man’s’ ARPANET. They named it 'USENET'.

It was a dialup client-server system. The main feature of it was a group of online articles controlled, created and perpetuated by the users themselves.

A user on the University lab’s computer logged into the USENET computer, at some other location, that stored the USENET articles. These articles initially had to do with UNIX stuff. You’d read about people reporting bug fixes, or reporting troubles they were experiencing with the software and asking for suggestions, and even the odd one showing up having a random piece of computer hardware for sale. You could read the articles, post some of your own comments or questions, reply to a writer of an article by sending them an email that was stored in a mailbox system on the USENET system or reply right in the forum, etc. Universities and big businesses with computer systems (e.g., Bell Labs) from all over logged into this system; it was open to anyone (running UNIX of course).

Soon, as traffic increased, more UNIX article categories began to appear. For example a dedicated buy/sell group appeared. A group for only bug fixes. A group for general questions. And others.

The system was designed so a user could log in, decide which group or groups they wished to visit and then go inside them and read, write, interact.

Anyone was welcome.

Eventually word of the USENET reached the University of California, Berkeley.

The big difference here was that Berkeley was also a member of the ARPANET.

Some innovative, open-minded students there decided to integrate the ARPANET’s existing discussion groups into the USENET groups. However, ARPANET groups were generally organized around the user who created a discussion and then decided who would be included in it. USENET, on the other hand, was much more democratic in character and would allow anyone to create a discussion article, and anyone else could join in on the discussion and post opinions or leave it alone if they wanted. This USENET style of system appealed to more people, was soon adopted as the standard and prevailed. It is the format the Internet still uses to this day.

Because the interactive articles became more and more popular with ARPANET users, it was soon decided to integrate the USENET, as an application, right into the ARPANET system full time. It became a permanent fixture there and therefore of the Internet as well. Soon other categories of groups were created. There were forums for discussion about politics, recreational things, anything for sale, general discussion, technical groups, and controversial discussion groups. People would often just go into groups to browse and read the varied and entertaining discussions and articles of other people but never contribute anything themselves. The term ‘lurker’ was coined to describe people like this.

On the Internet today, it is still called Usenet Newsgroups and what was first intended to be a helpful service for UNIX users, between UNIX users, eventually became a worldwide open forum of discussion on just about any topic you can imagine and continues to grow every day. Surprisingly, I find a lot of people who arrived on the Internet scene much later on or during recent times aren’t even aware of this area of the modern Internet.

Most of the major Internet software providers include a newsgroup reader in their package. There’s one in Outlook Express and Netscape but there are also online readers that you can access on the Internet with your regular browser software and browse the newsgroups without the need for specialized readers.

The Internet metamorphosis has so many stories and paths that I find it difficult to decide which ones definitely belong in a summary of the events and which ones can be left out. I believe it’s important at this time to bring up the aspect of the ‘great unwashed’ getting into the scene since it is ‘we’ who inherit the Internet when it finally slides into the general public domain in the end.

In my telling of the story up to this point I’ve been mostly writing about a group of what might be viewed as privileged people. I’ve been writing about engineers, technicians, lofty government officials, corporate funded researchers and university students all with access to million dollar computer systems and networks. State of the art for the time. They were let loose among the technology to create something and to their credit, by and large, they did us proud.

But during the mid 1970s another quiet revolution began to foam and stew. It was during this time of mainframes and University minicomputers and multi-million dollar budgets that a number of smaller companies began to market this new computer technology in an infinitely smaller capacity in the form of the ‘home’ or ‘personal’ computer (the PC). These simple, comparatively primitive devices were a computer an everyday person off the street could purchase, take home and experiment with. The first ones didn't even have monitors, they had to be wired to the back of your TV set!

The future arrived in the form of a simple ‘Popular Electronics’ magazine feature proclaiming the features and virtues of a lowly, simplistic computer called the Altair. Many of the lucky ones who could ‘play computer’ on huge DEC minicomputers and such simply laughed at the little Altair. But others saw in it some sort of potential. One of these guys was a man who dropped out of Harvard when he discovered this thing and soon created a business around the possibilities of this new device. I think they called him William Gates.

Around this time there were lots of people with vision like Gates. They saw the incredible potential in PCs and many of them seized the moment and went on to create the multitudes of enterprises that spurred along the entire chain of events, all at a speed never seen before in world history.

As more and more people bought the different little computers being offered by various companies (the Commodore, the Apple, the TRS-80, the IBM PC/XT) some of them relentlessly searched out new things to do with them. Others didn’t have the same ability or drive to push the limits of their new computers but they were still very much enthusiastic about the whole mystique, culture and draw that these devices had and would tinker with them as much as their abilities would allow. A 'computer culture' (as it came to be known) of PC innovators and users/testers of those innovations was born.

The ARPANET and its new ever-expanding Internet connections may as well have been a billion miles away for most people on the street. Those domains were unreachable and mostly unheard of or only in rumours at the time.

A guy named Christensen and a friend of his, also with a PC, had purchased a couple of new devices for their PCs called modems that had just come onto the market. This was late 1970s and modems at this time were acoustic coupling devices plugged into the serial port of the computer. Once installed, you dialed the other computer’s number and then laid the phone handset in the cradle of the thing and let the two computers whistle and squeal away at each other through the speakers as things happened on both screens and in both machines.

Christensen wanted to write a program to enable the transfer of files between his and his friend’s computers with these modems. He did and had some success with his efforts but the large margin of error with such a variable and dirty type of signal through an acoustic coupler became obvious when he transferred programs and they wouldn’t run properly because the transfer had corrupted them. One byte of data translated wrong could be enough, in some cases, to totally disable a program. This caused him grief and made him refine his efforts until he had a better protocol he named XMODEM, which checked each block of data as it arrived and had any damaged block sent again, so transfers completed error free. Soon this became the predominant protocol for serial transmissions between PCs. Acoustic couplers were replaced with direct-connect modems that sent far superior signals.
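The kind of check XMODEM relied on can be sketched in a few lines of Python. This is only an illustration under assumptions, not Christensen's code: it follows the commonly documented original packet layout (a start byte, a block number and its complement, 128 data bytes, and a one-byte checksum), and the function names are my own.

```python
SOH = 0x01          # "start of header" byte that opens each packet
BLOCK_SIZE = 128    # data bytes per packet
PAD = 0x1A          # filler used to pad a short final block


def checksum(data: bytes) -> int:
    """Add up every data byte and keep only the low 8 bits."""
    return sum(data) % 256


def build_packet(block_num: int, data: bytes) -> bytes:
    """Wrap up to 128 bytes of data in a checked packet."""
    if len(data) > BLOCK_SIZE:
        raise ValueError("data does not fit in one block")
    payload = data.ljust(BLOCK_SIZE, bytes([PAD]))
    n = block_num % 256
    return bytes([SOH, n, 255 - n]) + payload + bytes([checksum(payload)])


def check_packet(packet: bytes) -> bool:
    """Return True if the packet arrived intact, False if it was damaged."""
    if len(packet) != BLOCK_SIZE + 4 or packet[0] != SOH:
        return False
    if packet[1] + packet[2] != 255:      # block number vs. its complement
        return False
    return checksum(packet[3:-1]) == packet[-1]
```

The receiving side would answer a good packet with an acknowledgement and a bad one with a request to send it again, so a squeal of line noise cost a little time instead of a ruined program.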

Later he was restless again and got to thinking. He created an even better program for his computer. It was what he called a ‘Computer Bulletin Board System’ or CBBS. It was a message storage, forwarding and retrieval system. His buddy could dial Christensen's phone number, log into his computer and write, read, and answer messages. He advertised his new creation in a popular trade magazine and told people how to do it and how it worked. Soon people were dialing into his BBS to try it out. Computer people loved it and it caught on. Others set up their own BBSs.

Soon people started writing their own versions of BBS software with more and more features. It snowballed and after a few years the BBS community numbered in the tens of thousands with hundreds of little standalone BBS systems throughout the continent.

If you knew the telephone number, had communication software and a modem on your computer you could dial into one, sign in and do a number of things once ‘inside’. There was always a long list of free, public domain and 'shareware' software and little computer utility programs you could pick from and download back to your own computer. Little programs that did fancy, neat little things on your machine like display the time, defragment your hard drive, change the font size on your screen, on and on. (There was a huge community of amateur and professional programmers constantly writing programs that they shared with the world through BBSs). There would be at least one discussion forum that you could go into to browse the many messages or leave your own comments and get in on the discussion. The BBS discussion forums functioned very much the same way that Usenet Newsgroups functioned on the Internet.

Sometimes, the operator of the BBS would post advertisements or screens of information you could view just as you would if you were standing beside a bulletin board in some school hallway (hence the name). There might be a list of all the other BBSs in your immediate area, rules of the place, an important announcement, or whatever.

Once people knew that you were a new member of that particular BBS it was possible you could receive private electronic messages and these would be waiting for you when you arrived again, later on, onto the site. Especially if you commented in a very negative way against someone’s message in the discussion group during a previous log in *grin*. You could of course reply to them or send your own message out to other members as well.

A fascinating characteristic of most electronic mail and messages is the style of language, grammar and punctuation that developed right from the onset. It is a narrative, conversational style. The person writes the way people talk to each other in person, not the way schools and society normally expect a written document to be structured. It's a flowing, smooth style often mirroring the long stream of thoughts in the author's mind. The important thing is typing out what you want to say and not worrying at all whether it follows known conventions in writing.

Emotions a person is feeling are often interspersed throughout the message using a whole 'vocabulary' of characters, acronyms and (I'll call it) 'webspeak', invented by the people in the environment over time. You might see a sentence like, "You're a nut!". By itself it may be hard to interpret if the writer meant it in jest or not. But if they put a little sideways smiling face like this

: )

after it, the reader knows it's just in fun. But if you saw

: (

maybe that writer isn't sure you're 'all there' or is sorry for you.

>: (

has a frowning face with furrowed eyebrows showing the writer is annoyed or angry. Or they may just pop a little emotion in the line like I did a few paragraphs up from here, to help the reader 'hear' how it would be spoken and its intention. Acronyms like LOL signify 'Laughing Out Loud' and there are many different ones that have come to be used commonly. All in all it was another unplanned and unexpected development.

BBSs were strictly dialup server-client systems. Usually when you were logged on and doing your thing, no one else could. But the BBS operator could check if you overstayed your welcome. These operators were called Sysops (system operators) and they were mostly a strict, domineering bunch who jealously monitored their own 'kingdoms' and would not suffer abusive participants in their particular community of users. The BBS software clocked your coming and going, how long you hung around, if you participated in the forums, how much you downloaded, how much you uploaded (uploading files to the site and contributing to its store of available free software was greatly appreciated). Sysops could read all your personal mail and doubtless regularly did.

As time went on, BBS software became more sophisticated and a sysop could have multiple phone lines into his system with multiple modems so two or more people could be logged into the BBS at one time, on different phone lines. This usually presented another feature on the BBS of being able to type chat messages back and forth between you and other users online at the same time. Hardly anyone ever did though because it would be seen as needlessly wasting valuable online time.

In those days these sites were all text based with screens of menu options to make your selections from. These were the days of the MS-DOS or IBM DOS operating system and graphics weren’t a part of most programs of any kind. Commands were typed in from memory at the command prompt and programs did what they were intended to do without much flash and pizzazz. The spice of life then was color. The more flamboyant sites might have a BBS software that allowed the sysop to add colored borders around things, or highlight menu choices in a bright shade of color different from the color of the rest of the text and so on. Graphics, if they did exist, were blocky creations made from the outer extremes of the ASCII character table producing displays only similar to what they were intended to represent.

Some of the discussion forums on certain BBSs would become huge, popular communities of discussion, so large that if you stayed hooked up via phone line and read everything it would take hours. That would make you appear to be a time glutton in the eyes of the sysop who you strived to keep happy. As a result of this universal pressure felt by all users out there, people wrote programs like SLMR (called Slimer) that would allow you to log in, quickly download all new messages in a huge discussion forum, then log off. Now offline, you could then fire up Slimer and read the entire forum of new messages fast or slow to your heart's content, answer them, post your own fresh comments about something and so on. The next time you logged on to that BBS, you uploaded your work, and your replies and fresh stuff were picked up and integrated right into the real time discussion forum as if you had done your work in there.

Offline readers were a nice software feature that developed in the BBS world, necessitated by the nature of an environment that couldn't allow unlimited users to be online on the same BBS at once. But, as we've seen when discussing the intentions of the original developers, it was always a basic function in how the Internet interacts with you and its information. Rarely are you ever really 'on' the Internet but rather you are reading or looking at things that have been downloaded to your desktop computer, into the working area of whatever software you are using at the time (email, browsing, ftp, etc.). Your interactions may cause a quick upload of data from you to some Internet server somewhere in the world or cause a further download from it, but it happens so quickly that, most of the time you are attached to your Internet Service Provider (ISP), you are actually just sitting idle on the very edge of the Internet world.

Anyway, the BBS thing was all very low tech. Anyone could easily set one up if they wanted to. All you needed was a PC, a slow modem, a phone line, some free BBS software, and maybe a few free public domain files to entice your users. You loaded the software, gave your BBS a name, plugged in the modem to the phone jack, advertised your BBS name and phone number on lots of other existing BBSs and then just sat back and waited for people to log in. When they did, and they always did, suddenly you were a king of your own little online kingdom.

Like in the real world, these isolated islands of interaction would either become the favorite place of many to visit or they wouldn’t ever fully take off and be so infrequently logged into that they would soon disappear from the lists. BBS sites came and went with frequency but a very few held a strong presence for years and years.

Then another innovation occurred. A man named Jennings had an idea of using BBS technology and creating a network from it similar to a 'mini' Internet. He was a programmer so he sat down and in a while created a system he named FIDO. It was a BBS in every sense and he fired it up in 1983 in California where he lived. Except it had a big difference attached to it.

The next year he developed an association with a guy in Baltimore and set up a second FIDO site in Baltimore. It too was a bona fide BBS site, soon with its own group of dedicated users.

By the end of 1986 there were over a thousand BBS sysops using FIDO for their site. Some were in Europe. Each one was a part (a node) of Jennings’ unique network.

Here's how it worked:

What he had created was a sort of ‘accumulative, store and forward along’ type of network. Jennings envisioned it to be basically a zero cost, long distance network. People would dial into any one of the FIDO BBS sites, locally in their area, just like it was any other BBS site. They’d be able to participate in the many different categories of discussion groups in there. Now let’s say, for example, Jill in Chicago responded to a message posted from a guy named Bill in Vancouver in some discussion group about motorbikes. Jill types her comment to Bill then logs off and goes to bed.

Later, in the middle of the night, the FIDO site she had visited that evening automatically dials up a neighboring FIDO site, usually close by or within minimal long distance charge range. It quickly dumps all the fresh activity from its message groups from that day into the other computer as well as any private messages that might have been sent to the mailroom of that particular FIDO location and mail that has to be passed along to another FIDO site down the line. The other computer also reciprocates and exchanges any new activity and email it may have gotten that day as well. If the mail is addressed to that site it will stay in its forums or mail room, otherwise it will be forwarded on. Then they log off.

All the FIDO sites (nodes) have been programmed to phone around in this way, in a unique pattern according to the way Jennings has managed it all. There are also a variety of sophisticated update routines that run through sites set up as 'hubs' for their areas. A hub receives the messages in bulk for its entire metro area and later distributes them to the other local FIDO sites in the city and surrounding countryside, which saves a distant node from having to make a long distance call to each of those sites individually. Later on, the FIDOnet even had a way of using the Internet to relay its bundles of daily data around its network.

Anyway, the next day, the other person, Bill in Vancouver, logs into his local FIDO BBS site (local phone call) and starts looking through the message groups he likes to browse and participate in. There he finds a response to his comments from a person named Jill in Chicago of all places. Bill, at this point, could reply to the message in the discussion forum or even send Jill a private message to her Chicago BBS mailbox if he wanted.

The whole nighttime routine of updating the entire network went on every day, without fail, all year long.
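The store-and-forward routine described above can be sketched as a toy simulation in Python. To be clear, this is not how the real FIDO software was written: the class, the routing table and the Denver hub are all hypothetical, invented only to show messages hopping one phone call per night toward their destination.

```python
class Node:
    """One BBS site in a toy store-and-forward network."""

    def __init__(self, name, next_hop=None):
        self.name = name
        # Hypothetical routing table: destination site -> site to phone.
        # A destination not listed here is phoned directly.
        self.next_hop = next_hop or {}
        self.mailroom = []   # messages that have reached their destination
        self.outbound = []   # (destination, text) waiting for tonight's call

    def post(self, dest, text):
        """A user leaves a message addressed to another site."""
        self.outbound.append((dest, text))


def nightly_exchange(nodes):
    """Every site makes its calls once and hands over its waiting messages."""
    in_transit = []
    for node in nodes.values():
        for dest, text in node.outbound:
            hop = node.next_hop.get(dest, dest)
            in_transit.append((hop, dest, text))
        node.outbound = []
    for hop, dest, text in in_transit:
        receiver = nodes[hop]
        if dest == receiver.name:
            receiver.mailroom.append(text)           # it stays here
        else:
            receiver.outbound.append((dest, text))   # pass it along tomorrow


# Jill's Chicago site routes Vancouver mail through a made-up Denver hub.
nodes = {
    "chicago": Node("chicago", {"vancouver": "denver"}),
    "denver": Node("denver"),
    "vancouver": Node("vancouver"),
}
nodes["chicago"].post("vancouver", "Nice bike, Bill!")
nightly_exchange(nodes)   # night one: Chicago hands it to Denver
nightly_exchange(nodes)   # night two: Denver hands it to Vancouver
print(nodes["vancouver"].mailroom)
```

In this simplified version each hop costs one night, so a message relayed through a hub takes two nights to arrive. Nobody ever pays for a call longer than the distance to the next site, which was the whole point.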

What you have as a result is a very effective, albeit slightly delayed, network. So throughout the 80s and at least half way into the 90s, people had a choice if they wanted to participate in just a popular local, standalone BBS community or they could visit a local FIDO site, and participate in an even larger community that stretched into Europe and all over North America. Or they could do both! The FIDOnet was still going strong even when I last visited a FIDO site way back in 1999 even though the Internet we all know now was by then going strong as well. FIDO had a huge community of devoted users but I doubt it exists any longer.

The BBS community was a snapshot of how the Internet was destined to be. People representing every type of conversation and opinion. There were rotters, there were good ones, there were lurkers, there were the salesmen, the buyers, the sneaky, the honest, the good, the bad, the ugly. The only difference between then and now is that BBS sites had the ever watchful Sysops who would quickly dispense justice if a user became too belligerent or distasteful. The Internet, on the other hand, doesn't have this 'big brother' mentality.

And I seem to remember it was called 'mail' or 'messages'. I don't believe 'email' became a word until the onset of the Internet in the mid 90s...

Anyway, the point of all of this section is that if the general public hadn't taken such an enthusiastic shine to the personal computer since it popped up in the 1970s it is speculative whether the industry's response to the consumer demands of faster and faster machines, more memory capacity, better screens, better software, would have happened as it did. It seems that PCs, the bandwidth, the speeds of processors all matched the growth of the Internet perfectly until at just the right time these two worlds collided and produced the most remarkable system.

Let me try to finish this story now.

By 1990 the Internet was nearly fleshed out to how we now know it but the most major feature of all was still absent, yet to be conceived.

There was no WWW, no World Wide Web! This is the graphical interface we all most associate the Internet with. That window in our browser to a virtual cyber world in which we click and scroll and roam all over the Internet.

Before it was created, accessing the Internet was a lot like using old MS-DOS or UNIX based computer terminals. There were no graphics staring back at you from the drab, completely text based menu systems.

There were no Web pages but email existed, newsgroups existed. If you knew the commands you could visit other computer sites but there wasn’t much to see when you got there. You could get and transfer files around and run text based applications. That is if you knew how to find them and knew the commands to work with them. It was a world for the geeks and computer nerds who liked it that way and could use it just fine.

A man named Berners-Lee changed this. He envisioned information being held on servers. A user’s client program (browser) on another networked computer could access these servers and take what info it wanted but view it through a window like its own little virtual world inside the Internet. The browser window wouldn’t be just a passive screen you looked at but a medium you could interact with and use to move around the Internet and get tasks done.

More protocols had to be invented for the new application he had in mind to work. He needed a protocol that would let the user's computer ask the server holding the documents it was looking for to send them over. He created the Hypertext Transfer Protocol or HTTP. Look familiar?

Then a universal way to display and structure the documents the browsers would seek out and load from each server site had to be created as well. This resulted in the creation of the Hypertext Markup Language or HTML.
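To give a feel for how these two pieces fit together, here is a small Python sketch: one function builds the text of a simple HTTP request, and a tiny parser pulls the hyperlinks out of an HTML page. The host name, the page and the helper names are all made up for illustration, and nothing here touches a real network.

```python
from html.parser import HTMLParser


def build_request(host, path="/"):
    """Text a browser might send to ask a server for one document."""
    return f"GET {path} HTTP/1.0\r\nHost: {host}\r\n\r\n"


class LinkCollector(HTMLParser):
    """Collect the target of every <a href="..."> link in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


# A made-up page of the plain, early kind: a heading, text and links.
PAGE = """<html><head><title>Motorbikes</title></head>
<body><h1>Motorbikes</h1>
<p>See the <a href="/parts.html">parts list</a> or the
<a href="http://example.org/clubs.html">club pages</a>.</p>
</body></html>"""


def find_links(html_text):
    """Return the link targets found in an HTML document, in order."""
    collector = LinkCollector()
    collector.feed(html_text)
    return collector.links


print(build_request("example.org", "/index.html"))
print(find_links(PAGE))
```

HTTP is the asking and answering, HTML is the marked-up document that comes back, and the links inside it tell the browser where it can go next.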

It took over a year to work everything out and create it all but finally it worked and this new ‘World Wide Web’, as he called it, came to life on the Internet in early 1991.

The first browser was made available for anyone to download, for free of course. The new medium was advertised as active and ready for anyone to try out, on many newsgroups on the Internet.

Two years later there were approximately 50 servers devoted to the 'WWW' or 'Web' but compared to file transferring, email, newsgroups and many other applications, this new application on the Internet wasn’t very popular at all. It was thought of as just one more thing on the Net among many. This was probably because all the users of the Internet at that time had been there for a long time by then and were used to, and good at, using all the text based applications and typing in commands. Plus the 'Web pages' (as they came to be known) that a person could bring up from the Web servers with this new browser software were very drab things with hyperlinks on them to text pages of bland documents or pages of navigation links to other places or applications that users could get to as easily just typing them into the traditional menus.

There were no graphics, no colors.

Then everything changed.

A young guy named Andreessen, who grew up in the PC world noticing the popularity of the point-and-click graphic environment of Mac computers, had a boring job at a supercomputing center that was a member of the Internet. He thought it would be an interesting thing to create a similar look and functionality for the Web browser he had tried out but had found to be very plain and unexciting. He was a very young man, immersed in the PC culture with colors, graphics, pop and dazzle everywhere, so it was probably natural for him to feel that a bit of flash could modernize a landscape as monotonous and bland as the Net.

In January 1993 he finished and made available his own version of a Web browser he called Mosaic. He also extended HTML to handle graphics and colors! The really special thing was that his new Mosaic browser could interpret and display these images and colors. He offered it all for free to the Internet public and notified everyone on a few Usenet newsgroups.

The user response was wild. People loved it. The Web went a little crazy. People discovered how easy it was to create HTML documents and put them up on Web servers, and the user numbers increased exponentially. So did the number of servers and the information people were posting on their new Web sites. Everything grew at an astonishing rate.

In 1994 Andreessen teamed up with a partner and formed a little company with him. They abandoned Mosaic and wrote a new browser from the ground up with greater features and enhanced capabilities, especially programmed with the PC in mind. They called it Netscape Navigator 1.0.

Commercial businesses and the public went wild for it. And a snowballing effect caused a frenzy as more people got online and into the Web. More and more Web sites began popping up on servers everywhere that showed off people's information or products on pages to whoever wanted to see them.

The Web was beginning to get so big that it spurred on other innovative people to develop Web applications they called 'search engines' to help people easily search for and find the sites and information they were seeking. 'Alta Vista' was an early popular one but you probably know of the most popular one these days, 'Google'. So popular, in fact, that if someone can't find something, people now will often advise them to "Google it!"

Navigator 2.0 was a new version of the browser that also enabled users to send and receive network email, and it included a reader for taking part in the huge repository of Usenet newsgroups. Many companies liked the new email feature as they started to include email as a regular and important part of their ability to communicate with their customers and suppliers.

(Later, when Netscape Communications went public on the stock market, by the end of the first day of trading 24-year-old Andreessen was worth $58 million. The commercial world was beginning to smell blood in the water.)

Things just kept rolling along and the Web simply expanded by the day.

But wait...

Microsoft. Bill Gates?

Where was Microsoft during all of this?

It’s hard for people to find out for sure why they were so obviously absent during the Web’s birth but I read a biography of Bill Gates a few years ago. The author claimed that Microsoft didn’t even have an Internet server on their facilities until 1993. They got it because Bill’s 2nd in command had gotten flak from a bunch of customers who were complaining that Microsoft products were glaringly missing TCP/IP. This big boss said, "What the heck is that?" and finally had a server installed so his people could figure it out and get this TCP/IP thing into their products and the customers off his back.

Then one day in the spring of 1994 one of Bill Gates's very trusted advisors told him of this thing called Mosaic he had seen a crowd of excited students using at Cornell University.

Something snapped Bill out of his daze. He suddenly found himself face-to-face with a vision of the future that many others before him had already pictured but being in a more, shall I say, strategic position, he was able to act on his a little more easily and fully. He frantically sent his entire company into a pedal-to-the-metal frenzy for the next year or more. They wrote and rewrote all the Microsoft products to incorporate the Internet into them. They came up with a fresh-looking, Internet-minded graphical interface operating system.

Microsoft didn’t have a browser so they did the most time-efficient thing. They bought their way in. They licensed the Mosaic code from a company called Spyglass, which had ended up with the commercial rights to it. They worked it over and repackaged it as the new Internet Explorer 1.0. It was given away for free everywhere they could think of.

Andreessen had awakened the 1,000-pound gorilla that was sleeping in the middle of the rug in the living room.

After a wild string of months, in August 1995 Microsoft launched its new 'Windows 95' operating system (which came with a browser and email/newsgroup software as well). It was a huge, expensive marketing campaign that flooded the media and greatly hinted at the marvels awaiting people on the Internet. Bill would make speeches telling the media how computers would become such integral parts of our lives and how those computers would be seamlessly attached to this Internet. The benefits to people would be great. (The benefits weren't too shabby for Microsoft either.)

Media hype ensued and the message was spread. It was because of this sudden, unexpected surge of computer mania that most of the population got the impression that the Internet also was a 'new' thing, not knowing its long, torrid past.

So that’s pretty much it.

People told 2 friends, and they told 2 friends, and they told 2 friends and so on, and so on, and so on…

The Internet and Web continued to expand and become more and more diverse, refined and sophisticated. It’s creeping into our cellphones, home phone systems, the cable TV systems… Who knows where it'll end up?

Anyway, now we're here.